Dear fans and followers of These Vibes Are Too Cosmic:

It is wonderful to see continued engagement with our content on this site! I am looking over our posts several years after this blog was last updated in mid-2019, and so much about our politics, health, and science has changed since then. The show, in its former interview-heavy form, had to peter out as Stevie and I turned toward finishing experiments, writing papers, and graduating. Fortunately, we both completed our PhDs over the following years and have since moved on to various life ventures.

First, an update on me: though you may have known me by a different name, I'm Frances, a physicist at the Princeton Plasma Physics Lab (PPPL) and still as avid a science communicator as I have the bandwidth to be. The more I come out to the world as trans, the more important it is to connect the dots in every venue, so I am now connecting my new name to this website and to a body of work I have loved producing. Nothing is changing about my intention to bring more science to the radio.

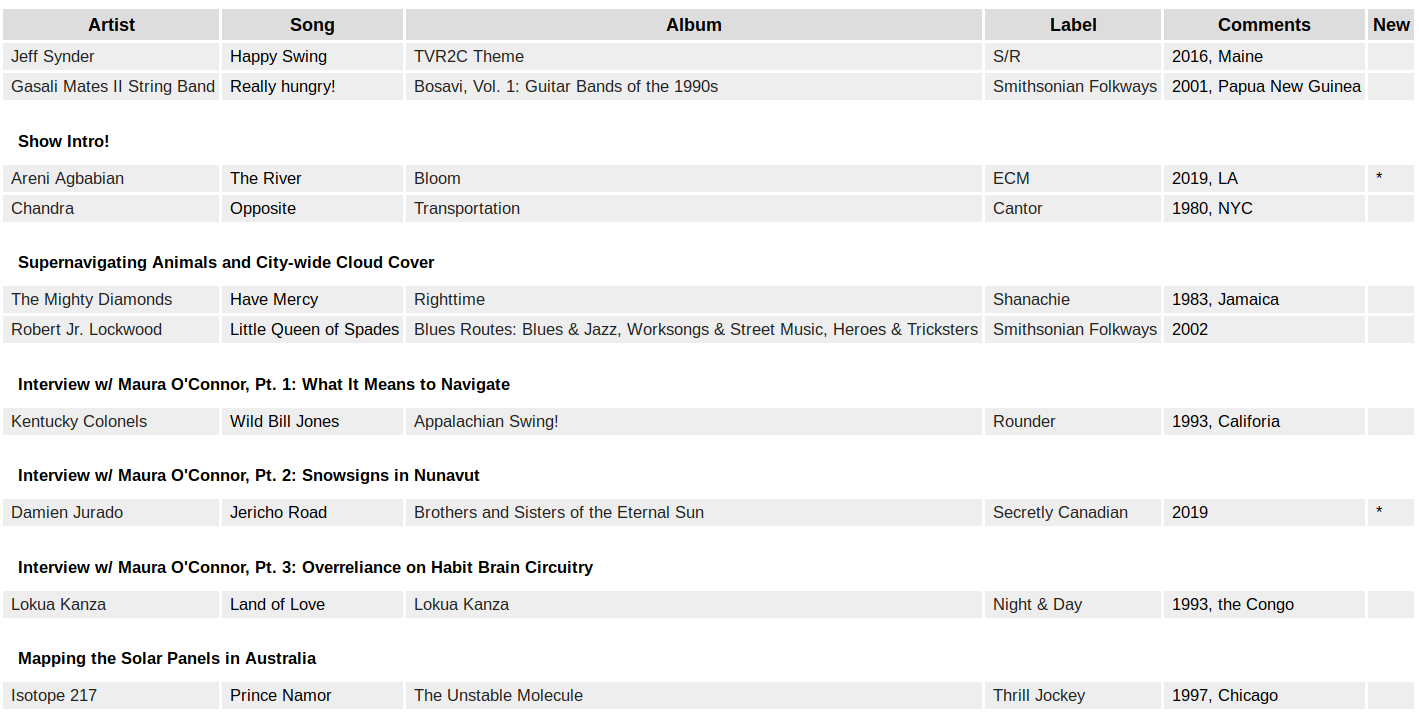

That being said, TVR2C has not gone away, though its form has changed substantially! Over the summers of 2021 and 2022, I led a weekly (later biweekly) WPRB program under this same name, These Vibes Are Too Cosmic, which dropped the interviews with scientists but covered many science news stories over the course of each show. Rewarding as this format has been, I hope that future work brings back conversations with real scientists (not just my voice!) and that I have time to return to WPRB 103.3 FM summer after summer.

Stevie has made plenty of totally unique strides herself, pushing the world forward in her own way. She has changed fields from physics to AI ethics and tech policy, and is currently a Senior Ethics Research Scientist at DeepMind. Somehow or other, we'll both be involved in any future directions These Vibes takes.

Thank you all for your continued listening, feedback, and support!

Cheers,

Frances, Staff Research Physicist at Princeton Plasma Physics Laboratory and DJ at WPRB